Why You Should and How You Can Move Away from Existing DLP Programs

BUSINESS DATA PROTECTION NEED IS GREATER THAN EVER

Data breaches continue to expose personally identifiable information (PII), intellectual property (IP), and other sensitive data at an alarming scale. Human error and credential theft are often involved; 82% of data breaches involve the human element and 61% involve credentials, meaning that intentional and unintentional data loss by both malicious and well-meaning employees is a predominant cause of a breach in addition to the malicious data exfiltration conducted by external cybercriminals. All of these trends must be tactically addressed as part of an overall data protection strategy. Data breaches are costly events that carry lingering consequences.

WHAT'S THE COST OF A DATA BREACH?

The average cost of a data breach increased 2.6%, from USD 4.24 million in 2021 to USD 4.35 million in 2022. The consequences of a breach affecting PII and IP can be very serious and include direct loss of revenue, diminished reputation and customer trust, noncompliance fines, class action lawsuits, loss of competitive advantage, operational downtime, and employee turnover, especially at the executive level. Many companies underestimate the effect a breach can have on reputation, but customers' perception of a business can be severely damaged by a data breach: in a 2019 survey, 69% of respondents said they would avoid a company that had suffered a data breach, and 29% of them said they would never visit that business again.
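As a quick sanity check on the figures quoted above, the year-over-year increase can be recomputed from the two averages. This is only an illustrative sketch; the function name `percent_change` is a hypothetical helper, not something taken from the cited report.

```python
def percent_change(old: float, new: float) -> float:
    """Return the relative change from old to new, as a percentage."""
    return (new - old) / old * 100


# Average breach cost in USD millions, as quoted in the text.
cost_2021 = 4.24
cost_2022 = 4.35

increase = percent_change(cost_2021, cost_2022)
print(f"Increase: {increase:.1f}%")  # prints "Increase: 2.6%"
```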

Data protection solutions are therefore, more than ever, vital security controls for protecting the reputation and business continuity of every organisation. Recent information security forecasts estimate the growth rate for enterprise data loss prevention at 6.6% in 2022, with cloud data protection growing at a higher, double-digit rate, and these figures do not even include the massive adoption of DLP capabilities from integrated DLP services or cloud-native service providers.

MODERN BUSINESS TRENDS EXPOSE DATA IN NEW WAYS

The emergence of the hybrid workforce, where employees have the flexibility to work freely between corporate offices, branch offices, home, or on the road, has rapidly changed the way business is done. To sustain this highly distributed enterprise model, which accelerated rapidly at the onset of the COVID-19 pandemic, organisations have embraced a large number of cloud-based solutions. Cloud apps have become instrumental in keeping employees connected, fostering business collaboration, and sharing data conveniently. Worldwide end-user spending on public cloud services is forecast to grow 20.4% in 2022.

In this scenario, organizations face new and unexpected data protection challenges as their sensitive data, such as personally identifiable information (PII) and intellectual property (IP), moves outside the traditional corporate premises and is more exposed, in the cloud and across the mobile workforce, to newer threat vectors.

Over the years, data has also evolved significantly, growing in volume, variety, and velocity. Sensitive information can be embedded in structured files and data types, in unstructured formats like images and screenshots, and can even flow through asynchronous communications such as email messages and collaboration apps like Slack and Teams. As a result, sensitive data is harder to identify, track, and protect, more vulnerable to theft, and prone to both intentional and unintentional exposure. Data protection technologies have tried to evolve accordingly, but it is hard for most DLP vendors to keep up with the pace of change.

TRADITIONAL DLP SOLUTIONS

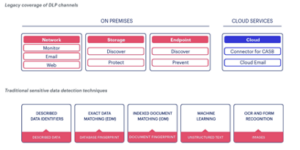

Traditional DLP solutions have successfully protected organisations from the risks of data loss for over 10 years. Originally designed to solve a small variety of conventional data loss use cases, their fundamental mission was to discover sensitive data and prevent it from leaving the organisation’s boundaries: an employee’s device, a physical file repository like a file server or NAS, a data centre’s network, etc. Some DLP solutions have expanded gradually, update after update over the years, to cover newer use cases and new network environments, including endpoints off the network, ever larger storage repositories, server clustering for high availability, and more network egress points via proxy and email MTA. Before the cloud era, those solutions reached a high level of sophistication in monitoring on-premises data egress points, and even today few alternative solutions can claim the same comprehensive coverage of data channels or the maturity of traditional DLP controls.

But the cloud has changed everything. When DLP solutions were initially conceived, their architecture was conveniently layered on the on-premises network infrastructure of that time. DLPs are traditionally software-based solutions that need server machines, hardware proxies, ICAP connections, local agents, on-premises databases, on-premises management interfaces, and other components in order to work. Their complexity has intensified over time, requiring multiple detection servers to cover data at-rest, in-use, and in-motion, larger databases, increased computing scale, and a costly bolt-on approach to expand to new environments. And such on-premises architecture was likely to be replicated for each branch office.

In cloud-enabled environments, the protection perimeter is fundamentally the data itself. The “perimeter” now extends beyond legacy network constructs and legacy modes of approaching data protection. Data travels everywhere and can be accessed from seemingly anywhere a user or a device wishes to connect to it.

Many organizations have invested great amounts of time and money in legacy DLP deployments. They have gone through several growth stages, spent years setting up and fine-tuning their data protection policies and configurations, extended their policies to newer channels, added new compliance requirements, and created complex network configurations, while their practitioners have become technology experts. Now these organizations are realizing that what might have been an architectural masterpiece years ago is no longer sustainable. Moreover, it is becoming obvious that traditional DLP cannot solve most of today’s use cases, as data is massively used and stored across cloud services and shared across devices outside the managed premises. But because these technologies are so anchored to their on-premises deployments, they are very sticky. It is not easy to replace them with something modern that would possibly work better, at least not without security downtime and a lot of work by already-stretched teams.

The reputation of traditional DLP has drastically diminished. Legacy DLP solutions are now seen as extremely complex to deploy and maintain, requiring complicated programs, and no longer effective as needs have evolved. With complexity comes liability, high operational costs, maintenance, and training expenses. Another perception is that DLP can only be used by very large enterprises, because only large organisations have the bandwidth and the budgets to afford its total cost of ownership (TCO). This is a challenging paradox for most enterprises: data protection is increasingly needed, and DLP adoption is growing at a fast pace, but traditional DLP is no longer loved.

SHORTCOMINGS OF EXISTING DLP SOLUTIONS

Digital transformation and increased cloud adoption have created several challenges for traditional DLP solutions, and those challenges have grown until reaching a breaking point. Besides the architectural and operational complexity of traditional DLP, practitioners also realize that there are use cases that clearly cannot be solved, and environments that cannot be covered, by the existing tools. With an overwhelming number of devices today being mobile and unequipped with any DLP, with an ever-increasing number of cloud apps (a 35% increase in the number of apps in use in 2022), and with data no longer sitting in an on-premises data center, the paradigm has shifted, making old DLP go blind fast.

There are three main reasons for these shortcomings:

- Cloud and hybrid work, including SaaS, IaaS, and PaaS. Architected as on-premises solutions and anchored by their on-premises infrastructure (i.e., proxy appliances, multiple detection servers, on-premises databases, etc.), traditional DLPs don’t really extend to cloud channels. DLP vendors initially offered a partial workaround for data discovery in the cloud through ICAP integration with CASB solutions, which created the first big architectural limitations: disjointed environments, hard-to-reconcile policies, different enforcements, separate consoles, and considerable latency to enforce protections. Another approach used cloud detection services and REST API connectors to connect the on-premises DLP enforcement and the CASB, but this method only patched some of the problems and did not provide a real long-term solution. Cloud adoption has increased exponentially, and so have the use cases and risks to data.

  Hybrid work has left organizations with the huge burden of dealing with a DLP infrastructure that has become massive, extensive, and sticky, tied to on-premises dependencies and hardware components like proxies, databases, servers, etc. Coverage for highly distributed enterprises has become a nightmare because the on-premises architecture most likely must be replicated for each branch office. Most importantly, the legacy approach lacks coverage for remote employees connecting to corporate resources and risky cloud apps, for the unmanaged personal BYO devices that must also connect to corporate assets, and even for IoT devices accessing sensitive data from anywhere.

- Big data and increased computational requirements for DLP. Over the years, data has evolved significantly, booming not just in volume but also in variety and velocity. Sensitive information can be embedded in unstructured formats like images and screenshots, stored and shared in the cloud, and can even flow through asynchronous communications such as email messages and collaboration apps like Slack and Teams. As a result, sensitive data is increasingly hard to identify and therefore to protect. Traditional DLP solutions don’t scale at cloud speed and can hardly keep up with newer use cases, data privacy laws, and regulatory requirements. They can’t ingest and process ever larger amounts of information or leverage sophisticated machine learning and AI models without adding more detection servers, larger databases, and voluminous endpoint agents, and without slowing down other computational processes of the organization. Many use cases therefore stay unsolved, such as advanced image recognition, correlation of context-based information from many risk vectors, advanced endpoint-based detection, and fingerprinting of large files and datasets. In addition, software updates for traditional DLP solutions are a nightmare: they notoriously take months or even years and a lot of manual work to go from one version to the next, not counting possible system errors and irrecoverable loss of data and configurations. Because of these lengthy and resource-intensive updates, organizations typically lag behind on DLP version upgrades and therefore are not using newer protections (newer data identifiers, newer detection methods, newer compliance policies, etc.).

- Too many false positives and no zero trust design. With data residing in and moving to more environments outside the managed data center network, and with the amount of data constantly growing, the number of incidents has grown to the point that it is now nearly impossible for the incident response team to triage and remediate every incident with the right level of analysis and understanding. A massive number of false positives floods incident response teams: thousands or hundreds of thousands of alerts per day that would demand direct attention but have to be overlooked for lack of time and bandwidth. Incident response teams have expanded accordingly, at a high cost. Automation and orchestration tools like UEBA have come to assist by ingesting alerts and finding a more optimized way to remediate them in bulk. UEBA is an effective tool in symbiosis with DLP, but the UEBA model is not sustainable if DLP becomes more and more inaccurate and its gaps grow larger. DLP must shift into a fully integrated zero trust data protection platform, able to ingest information from any security source and translate it into actionable policy recommendations and intelligent incident response rules.

THE NEED FOR CHANGE

Digital transformation has fundamentally shifted the ways organizations deliver value and drive revenue. It has forced them to rethink and redesign customer experience delivery and to reconceive products and services. Today, in a cloud-enabled world, we must align data security initiatives to these changes and innovate continuously at cloud speed. Corporate business initiatives suffer when security teams fail to adapt to these changes and adopt the modern architectural and operational models to facilitate them. It’s a no-brainer that the DLP architecture must change in order to adapt to the modern hybrid work world and be future-proof. DLP has to be delivered from the cloud to ensure broad coverage, high efficiency, great scalability, and unlimited computing power. It also needs to provide a high degree of efficacy to guarantee accurate data protection against every data loss risk. For example, SaaS applications have replaced many on-premises applications because they are able to deliver great advantages to organizations as they go through the modern digital transformation and embrace hybrid operational models. SaaS apps keep their users always connected, help store more data, make resources available anytime and from everywhere, make business transactions and customer services efficient, easier and faster, and they scale rapidly to cover additional branches and employees. They fundamentally solve complicated problems more easily. In the same way, data protection offers great advantages when it’s delivered from the cloud.

The most relevant advantages include:

- Comprehensive coverage beyond networks. Cloud-delivered data protection can easily extend beyond the corporate boundaries to cloud services and users anywhere. It can discover and protect data across every channel, on-premises and in the cloud, everywhere data flows and anywhere it’s stored. This model doesn’t require additional infrastructure but can leverage existing control points such as SaaS APIs, cloud security gateways, endpoint clients, etc.

- Infinite computational scale to anticipate new needs and unlimited levels of sophistication. Data scanning and detection algorithms can run in the cloud, removing the burden on the network computing infrastructure and the need for voluminous endpoint agents. They can ingest and process large amounts of information and make smart decisions automatically based on rich risk context. They can deploy sophisticated machine learning and AI data identification models to detect sensitive data with a high degree of accuracy and to solve more challenging use cases. This minimizes false positives and allows incident remediation workflows to be automated.

- Risk and context awareness for high data protection efficacy. A cloud-delivered data protection platform can be easily integrated across the security and networking infrastructure and within cloud services, sharing intelligence and continuously gathering risk context. It can collect large amounts of logs and information from other security and infrastructure sources such as cloud security tools, SaaS security posture management, cloud security posture management, network security, endpoint security and posture, identity, user behavior analytics, security orchestration, etc. Such a vast pool of information can be leveraged to identify sensitive data accurately and, most importantly, to determine the best type of response to data security incidents and violations based on risks, situations, instances, locations, behaviour, reputation, and other factors. Security context makes DLP risk aware, with continuous and adaptive assessments.

- Lower cost of ownership and ease of deployment, maintenance, and scale. A cloud-delivered architecture is not tied to an infrastructure. It can be modeled to the cloud, meaning it always stays up to date, with always-on protections and the latest updates available in real time everywhere the service is deployed.

NEW CLOUD DLP PLATFORMS SOLVE MODERN USE CASES BUT NOT ALL OF THEM ARE MATURE YET

Today a few security vendors have taken the opportunity to drive the change and fill the modern data protection needs that are left unresolved by legacy solutions.

New cloud-delivered DLP solutions provide more visibility in the cloud and beyond the corporate premises, and they scale with less friction. The old bolt-on DLP approach, made of multiple detection servers covering different environments (web, email, at-rest discovery, cloud CASB, etc.), has finally been replaced by a single data protection brain in the cloud, for consistent policy enforcement everywhere data resides and for better system manageability. ML and AI can now be delivered at full capacity, leveraging the highly scalable computational power of the cloud. Cloud DLP solutions also have the capacity to ingest and triangulate more information from different sources, including application risks, network risks, device postures, user behaviors, etc. This is transformative. But not all new DLP solutions are the same, and most of them have not reached the level of maturity, detection accuracy, and sophistication of controls required to take on the heritage of legacy DLP solutions and to be practical. In fact, some vendors have only recently started offering integrated DLP capabilities in their core products, hoping that aggressive marketing will be enough to sell buyers on products that actually lack breadth and depth.

Two dominant models have emerged:

- Integrated DLP solutions. These include DLP capabilities as part of a web gateway, CASB, or NGFW and are broadly described as part of SASE platforms. They are delivered from the cloud and integrated within a network security appliance or service.

- Cloud-native DLP solutions. Today many cloud service providers (CSPs) and SaaS vendors offer native DLP capabilities. These are readily available, cloud-focused solutions that are increasingly chosen by organisations pursuing a cloud-first strategy or beginning their data protection journey.

Data protection must undoubtedly be delivered from the cloud to be practical in modern times. In addition, practitioners know very well that a data protection program also needs feature maturity and a vendor’s full dedication and expertise in order to succeed. “Good enough” capabilities can produce inaccuracy, partial detection, and, most importantly, far more false positives than an organization is capable of handling. Being integrated often means that these solutions are just bundled services: “included”, but not architected to actually consume logs and contextual information from other controls. Their incident response actions therefore stay inaccurate because they lack awareness of business context and risks. This is a data protection strategy destined to fail.

In the next section of this paper we will explore the reasons why only Netskope DLP provides the high level of sophistication and the superior solution needed for a data protection program to succeed, thanks to the vendor’s full dedication to data protection and its continuous innovations over the past decade. Netskope is not just another SWG/NGFW/CASB vendor adding a DLP capability to its core product. Netskope is the data protection vendor of choice in modern times.

ZERO TRUST DATA PROTECTION

We believe that the implementation of a data protection program should not hinder user experience or be an obstacle to business productivity. Data protection should be at the core of an organization’s zero trust initiatives. Judiciously applying zero trust means that we must go beyond merely discovering sensitive information and controlling who has access to it. We must factor in continuous, real-time access and policy controls that adapt on an ongoing basis to a number of factors, including the users themselves, the devices they’re operating, the apps they’re accessing, the threats that are present, their changing behavior and reputation, and the context in which they’re attempting to access data, such as geolocation and type of endpoint machine.

Data protection is ultimately about context. By monitoring traffic between users and applications, we can exert granular control. We can both allow and prevent data access based on a deep understanding of who the user is, what the user is trying to do, and why the user is trying to do it. This data-centric approach is the only effective way to manage risk in the modern hybrid and highly distributed enterprise.

Netskope DLP is the only solution that can not only take on the heritage of legacy DLP solutions but also move the technology to the next level, a Zero Trust Data Protection solution, with innovative technologies like machine learning and UEBA. Netskope DLP is natively integrated into the comprehensive Netskope Security Service Edge (SSE) solution and delivered as a core element of SASE, enabling organizations to take advantage of a fully converged, cloud-native security platform that consolidates the most vital security technologies. This approach eliminates security blind spots, provides consistency, enhances performance, and dramatically reduces the costs and complexity typical of a multi-vendor ecosystem.

Customers can:

- Attain greater visibility and risk mitigation across all key vectors from a single converged SSE data protection solution based on zero trust principles and state-of-the-art data protection controls.

- Simplify data classification, policy definition, and incident management with a converged platform enriched by machine learning, rich reporting, and advanced analytics.

- Boost end-user agility and reduce friction with flexible context-driven policies and a lightweight agent.

Ultimately, a successful data protection program must coach employees and encourage safe data behavior while preserving the freedom of business decisions. To be effective, user coaching must alert users to their data security violations in real time, not in retrospect, when users may have no memory of past events. With 10 years of investment in data protection and a long track record of industry-first innovations, Netskope is a technology leader that is fully committed to data protection, with DLP at the core of its approach to helping businesses everywhere.
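The context-driven decision described in this section, in which access is allowed, coached, or blocked depending on the user, device, app, and activity, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Netskope's policy engine: the `AccessContext` fields, the `Action` values, the 0.8 risk threshold, and the rule order are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    COACH = "coach"  # allow, but show the user a real-time warning
    BLOCK = "block"


@dataclass(frozen=True)
class AccessContext:
    """Hypothetical signals gathered for one data transaction."""
    user_risk: float      # 0.0 (trusted) to 1.0 (risky), e.g. a UEBA-style score
    device_managed: bool  # corporate-managed device or personal device
    app_sanctioned: bool  # corporate-sanctioned app or unsanctioned app
    data_sensitive: bool  # did content inspection flag sensitive data?
    activity: str         # "upload", "download", or "view"


def decide(ctx: AccessContext) -> Action:
    """Pick a response from the context; the rules are illustrative only."""
    if not ctx.data_sensitive:
        return Action.ALLOW
    if ctx.user_risk >= 0.8:  # assumed threshold for a high-risk user
        return Action.BLOCK
    if ctx.activity == "upload" and not ctx.app_sanctioned:
        return Action.BLOCK
    if ctx.activity == "download" and not ctx.device_managed:
        return Action.COACH
    return Action.ALLOW


# Same user and data, different context, different outcome.
upload = AccessContext(0.2, True, False, True, "upload")
download = AccessContext(0.2, False, True, True, "download")
print(decide(upload).value, decide(download).value)
```

The point of the sketch is that the verdict depends on the combination of signals, so the same sensitive file can be allowed in one situation and blocked or coached in another.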

STEPS TO TAKE TO TRANSITION TO DLP

Traditional security implementations like DLP often carry the legacy of years of deployment, maintenance, patching, and expansion, like giant tumbling block towers in which all the pieces are meant to stick together. Switching away from such composite infrastructures is never an easy task, but knowing that the value of the innovation will be substantially greater should drive the change as organizations seek agility and scalability through their digital transformation. The transition doesn’t have to be radical and can be executed step by step. It’s also beneficial to leverage current investments that are working and build upon them.

Replacing a legacy enterprise DLP in its entirety and protecting all sensitive data across every on-premises and cloud environment by means of a single data protection solution should be the end goal. To get there, it’s important to make the business case and produce security metrics for all audiences: strategic metrics for the senior leadership, operational metrics to guide the interaction with the internal security teams, and tactical metrics to direct the activities of the frontline staff. Netskope support teams can help provide guidelines to produce leading indicators and to generate compelling data points and reports.

There is no “right way” to migrate to modern Netskope cloud-delivered DLP. Most organizations adopt the entire solution from the very beginning; others move slowly from a legacy deployment, adopting first the components that make sense for them as they move through their maturity stages. Every organization is different, but generally the migration journey follows this logical course of action:

- Reassess your data protection needs. The first stage is to conduct a thorough assessment of the current technology environment, to identify and understand what data must be protected, which services and repositories the organisation uses to store and process sensitive information, how these services are used by departments and individuals, etc. Here the security team needs to identify and assess all corporate applications, the email services and other collaboration tools in use, network locations, the users' hybrid work practices, the connecting devices, and the organisation’s current business processes, such as how data is shared among employees or with external parties. It’s important to identify and assess data-at-rest and data-in-motion activity and, where possible, the categories and types of data stored or processed within the scope of the business. This stage can represent a “quick win” for organizations, because it can support regulatory compliance efforts and, most importantly, because it may reveal that certain portions of the legacy DLP deployment are less effective now and no longer needed. For example, traditional on-premises storage discovery may no longer be worth the same investment because more data is stored in emerging cloud repositories.

- Many organisations start with the cloud apps. The second stage is to determine where the highest risks are and make it a priority to mitigate them. Solving new cloud data protection use cases is usually the motive and first step that leads information security organizations to transition to a modern cloud-delivered data protection solution. After all, more and more data lives across corporate SaaS applications, cloud email, and IaaS today, exposed to unintentional data sharing, malicious exfiltration, and other cloud-based cyber threats. Netskope's market-leading CASB solution embeds DLP as its core component. This approach provides both data security for corporate-sanctioned cloud applications and protection of data in-motion, especially when sensitive data is moving across unsanctioned and possibly risky apps. Choosing the right data protection vendor at this point is the most important step, because eventually organizations need to expand the solution to every environment they have, for consistency. Netskope is the only cloud vendor that provides the necessary hybrid coverage for all cloud channels and beyond, including on-premises data movements, because the solution includes endpoint DLP, email DLP, network DLP for web and for email, DLP for SaaS and IaaS, for private apps, etc.

- Cloud email is another starting point. The corporate migration to a cloud-based email service is another opportunity to kickstart the change. Netskope provides very extensive DLP protection for email, including Microsoft 365 and Gmail via APIs, real-time inline email protection, and even data protection for personal email instances.

- Protect data in-motion through every connection. With more sensitive data now stored in the cloud and flowing everywhere, connections to corporate assets can be established directly from home and other networks without a VPN connection, from branch offices, from corporate devices as well as from personal devices and IoT peripherals. This way, data can be accessed, uploaded, and downloaded beyond the oversight of enterprise security controls. In this scenario, a traditional “proxy-connected” DLP solution sitting in the data center covers data in-motion to corporate apps and to the web only partially, and only when the connection happens from the main HQ offices. Netskope's intelligent SSE natively embeds the unified Netskope DLP service to secure sensitive data transactions from anywhere people work when accessing the web, the cloud, and private applications, with zero trust principles and without any hardware constraints.

- Protect data on employees’ endpoints. For most organizations utilizing legacy DLP solutions, endpoint DLP is fundamental. Data today is stored more and more on cloud services; however, much sensitive data is still created on or downloaded to a corporate-provisioned machine. Once the data is on the machine, it could easily be exfiltrated through USB, for example. The Netskope DLP cloud service is integrated into the Netskope client and does not require deploying a separate agent on the endpoint. It is a lightweight endpoint DLP designed to minimize resource utilization while featuring the full suite of advanced DLP capabilities, including ML-based classifiers, OCR, File Fingerprinting, Exact Data Match, etc. It enables detection of data in-use via USB, USB device protection, and other device control policies to ensure that sensitive data is not lost or stolen whether the device is online or offline, on the corporate network, or connected from anywhere else.

- Build upon a solution that is working now. If a recent investment was made in native DLP capabilities from a cloud service provider (CSP) or a specific SaaS vendor, and this solution is meeting present needs, a wise approach is probably to live with it in the short or medium term. But if the organization plans to extend data protection to additional environments, such as multiple clouds and SaaS apps, consider that these environments could turn into too many consoles and disjointed sets of policies. Netskope DLP consistently protects all environments with uniform policies and via a single console.

- Evaluate and take advantage of newer capabilities offered by Netskope. Modern use cases require an updated approach to data protection. Netskope DLP expands the scope of data protection by delivering advanced data detection technologies driven by ML, superior performance and computing scale, and more accurate risk mitigation. For example, DLP is no longer a composite solution made of different components and third-party integrations (such as with a CASB), but a comprehensive service with unified policies and a single console. Certain resource-intensive detection methods like EDM, image recognition, and ML are now present even on a lightweight endpoint DLP agent, thanks to the cloud, as they were not possible in the past for endpoint DLP. Netskope today has enormously expanded the DLP computing capabilities in the cloud and can, for example, scan and fingerprint very large datasets. Most importantly, with Netskope, DLP is no longer an autonomous solution disjointed from the company security stack and unaware of business risks, but today it’s integrated into SSE and aware of business risks, behaviors, and security vulnerabilities across the entire organisation. By ingesting information about users, devices, networks, clouds, and behaviours, Netskope DLP adapts its decisions to changing conditions, minimises false positives, and produces more accurate results.
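Two of the detection methods named in the steps above, Exact Data Match (EDM) and file fingerprinting, can be illustrated with a short sketch. This is a simplified, assumption-laden illustration of the general techniques, not Netskope's implementation: the function names, the tokenizer, the unsalted SHA-256 hashing, and the three-word shingle size are all hypothetical choices made for readability.

```python
import hashlib
import re


def _digest(value: str) -> str:
    """Hash a normalized value so the index never stores raw data."""
    return hashlib.sha256(value.strip().lower().encode("utf-8")).hexdigest()


def build_edm_index(records):
    """Exact Data Match: hash every cell of a sensitive structured dataset."""
    return {_digest(cell) for row in records for cell in row}


def edm_matches(text, index):
    """Return tokens in the text whose hash appears in the EDM index."""
    tokens = [t.strip(".-@") for t in re.findall(r"[\w@.\-]+", text)]
    return [t for t in tokens if t and _digest(t) in index]


def shingles(text, size=3):
    """Fingerprint a document as hashes of overlapping word windows."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {_digest(" ".join(words))} if words else set()
    return {_digest(" ".join(words[i:i + size]))
            for i in range(len(words) - size + 1)}


def fingerprint_overlap(protected, candidate, size=3):
    """Fraction of the protected document's shingles found in the candidate."""
    reference = shingles(protected, size)
    if not reference:
        return 0.0
    return len(reference & shingles(candidate, size)) / len(reference)


# Hypothetical customer record and outbound message.
index = build_edm_index([("jane.doe@example.com", "4485-1122")])
message = "Contact jane.doe@example.com about account 4485-1122."
print(edm_matches(message, index))
```

In this sketch EDM flags only values that actually exist in the protected dataset, which is one way such methods can keep false positives lower than generic pattern matching, while the fingerprint overlap score catches partial copies of a protected document.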

The experience that internal DLP practitioners (the policy admins, the incident response team, etc.) have built over the years with the existing legacy DLP solution is an extremely valuable asset. It should be leveraged to replicate best practices and to ensure that all technological expectations are met, including producing compliance policy profiles and establishing the proper remediation workflows. Because Netskope DLP minimises the program’s efforts, security teams can spend less time on management and frustrating incident triage, and more on substantive security activities and proactive initiatives.
